Your XML sitemap is the roadmap search engines use to discover every important page on your website. A broken, outdated, or misconfigured sitemap can mean that Google never finds the pages that drive your organic traffic, or stops indexing them. Yet many site owners never validate their sitemaps after the initial setup.

The Sorank Sitemap Checker analyzes your XML sitemap in seconds, flagging structural errors, broken URLs, and indexing issues so you can fix them before they impact your rankings.

Why XML Sitemaps Matter for SEO

An XML sitemap serves as a direct communication channel between your website and search engine crawlers. While Google can discover pages through links, a sitemap ensures that every page you consider important is explicitly listed for crawling. This is particularly critical in several scenarios:

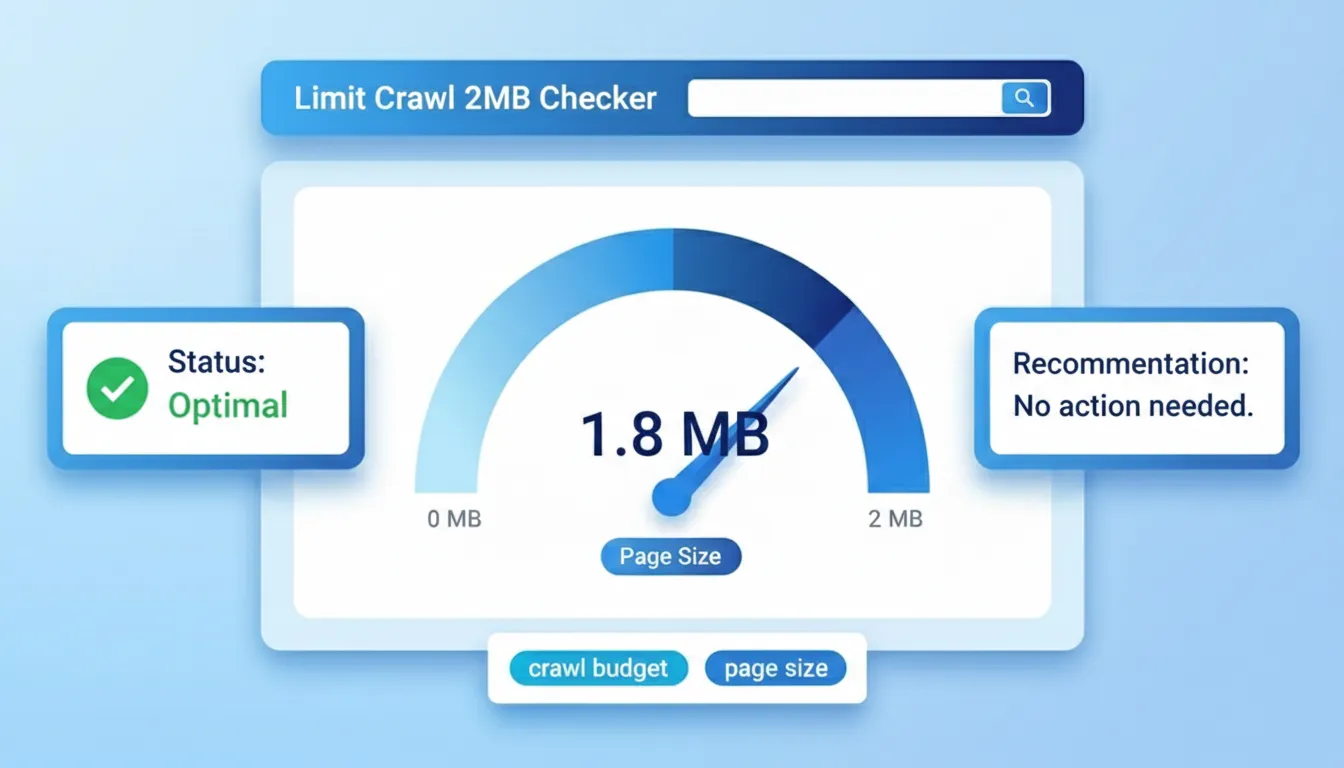

- Large websites: Sites with thousands of pages often have crawl budget limitations. A clean sitemap helps search engines prioritize which pages to crawl and how frequently.

- New websites: Fresh domains with few inbound links rely heavily on sitemaps for initial discovery. Without one, it can take weeks or months for Google to find all your pages.

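- Complex site architectures: JavaScript-rendered pages, faceted navigation, and deep category structures create crawling challenges. A well-structured sitemap mitigates them by listing the URLs you want crawled directly, instead of relying on crawlers to reach them through links.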

- Content updates: The lastmod tag in your sitemap tells Google when content last changed. When these dates are consistently accurate, Google can use them to re-crawl updated pages sooner.

- Orphan pages: Pages with no internal links pointing to them are effectively invisible to crawlers unless they are listed in your sitemap or linked from another site.

Common Sitemap Errors and Their Impact

Even popular CMS platforms generate sitemaps with issues that can silently harm your SEO. Here are the most frequent problems the checker identifies:

- Non-200 status codes: URLs returning 301 redirects, 404 errors, or 500 server errors waste crawl budget and signal poor site maintenance to Google.

- URLs blocked by robots.txt: Including URLs in your sitemap that your robots.txt file blocks creates a conflicting signal that confuses search engines.

- Missing or invalid XML formatting: A single malformed tag can cause search engines to reject your entire sitemap, so none of the URLs it lists can be discovered through it.

- Exceeding size limits: A single sitemap file may list at most 50,000 URLs and may not exceed 50 MB uncompressed. Larger sites need to split their URLs across several sitemaps referenced by a sitemap index file.

- Duplicate URLs: Listing the same URL multiple times, or including both www and non-www versions, dilutes crawl signals and can cause indexing confusion.

- Missing lastmod dates: Without accurate modification dates, search engines cannot efficiently prioritize which pages to recrawl.
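If you want to reproduce some of these checks yourself, here is a minimal Python sketch. It is not the Sorank checker's implementation. It assumes you have already downloaded one uncompressed sitemap as bytes and your robots.txt as a string. `check_sitemap` is a hypothetical helper name, and robots.txt rules are evaluated for the Googlebot user agent.

The sketch covers the size limits, XML well-formedness, duplicates, missing lastmod dates, and robots.txt conflicts. For duplicate detection, it treats www/non-www and HTTP/HTTPS variants as the same page. It does not check HTTP status codes, since that requires requesting every listed URL.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit
from urllib.robotparser import RobotFileParser

SM = "http://www.sitemaps.org/schemas/sitemap/0.9"  # sitemaps.org protocol namespace
NS = {"sm": SM}
MAX_URLS = 50_000
MAX_BYTES = 50 * 1024 * 1024  # 50 MB, uncompressed


def check_sitemap(xml_bytes: bytes, robots_txt: str = "") -> list[str]:
    """Return readable issues found in one uncompressed <urlset> sitemap."""
    issues: list[str] = []
    if len(xml_bytes) > MAX_BYTES:
        issues.append(f"file is {len(xml_bytes)} bytes, over the 50 MB limit")
    try:
        root = ET.fromstring(xml_bytes)
    except ET.ParseError as exc:
        # Malformed XML: nothing in the file can be read reliably.
        return issues + [f"invalid XML: {exc}"]
    if root.tag != f"{{{SM}}}urlset":
        issues.append(f"unexpected root element {root.tag}")

    entries = root.findall("sm:url", NS)
    if len(entries) > MAX_URLS:
        issues.append(f"{len(entries)} URLs, over the 50,000 limit: use a sitemap index")

    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())

    seen: dict[tuple[str, str, str], str] = {}
    for entry in entries:
        loc = entry.findtext("sm:loc", default="", namespaces=NS).strip()
        parts = urlsplit(loc)
        # Ignore scheme and a leading "www." so http/https and www/non-www collide.
        key = (parts.netloc.lower().removeprefix("www."), parts.path or "/", parts.query)
        if key not in seen:
            seen[key] = loc
        elif seen[key] == loc:
            issues.append(f"duplicate URL: {loc}")
        else:
            issues.append(f"variants of the same page: {seen[key]} and {loc}")
        if entry.find("sm:lastmod", NS) is None:
            issues.append(f"missing lastmod: {loc}")
        if robots_txt and not robots.can_fetch("Googlebot", loc):
            issues.append(f"blocked by robots.txt: {loc}")
    return issues
```

Python's `urllib.robotparser` follows the classic robots.txt rules and does not support path wildcards. Its verdicts can therefore differ from Google's for rules that use `*` or `$`.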

How the Sitemap Checker Works

The tool performs a comprehensive validation process in three stages:

- Fetch and parse: The checker retrieves your sitemap URL, handles sitemap index files with multiple sub-sitemaps, and validates the XML structure against the official sitemap protocol.

- URL validation: Each URL in the sitemap is checked for proper formatting, duplicate entries, and protocol consistency (HTTP vs HTTPS).

- Status analysis: The tool provides a summary of all URLs found, their structure, and any issues detected, giving you a clear overview of your sitemap health.
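The fetch-and-parse stage and the HTTP/HTTPS consistency check can be sketched in Python as shown below. This is an illustration under stated assumptions, not Sorank's code:

- `collect_urls`, `fetch_url` and `scheme_report` are hypothetical names.
- Gzip-compressed sitemaps and retries are not handled.
- The `fetch` parameter lets you swap the downloader, for example in tests.
- Google does not support nested sitemap index files, so the sketch follows only one level of index and treats a deeper index as an error.

```python
import xml.etree.ElementTree as ET
from collections.abc import Callable
from urllib.parse import urlsplit
from urllib.request import urlopen

SM = "http://www.sitemaps.org/schemas/sitemap/0.9"  # sitemaps.org namespace
NS = {"sm": SM}


def fetch_url(url: str) -> bytes:
    """Download one sitemap file (no gzip support or retries in this sketch)."""
    with urlopen(url, timeout=10) as resp:
        return resp.read()


def collect_urls(sitemap_url: str,
                 fetch: Callable[[str], bytes] = fetch_url,
                 max_depth: int = 1) -> list[str]:
    """Return every page URL, expanding a <sitemapindex> into its child sitemaps."""
    root = ET.fromstring(fetch(sitemap_url))
    if root.tag == f"{{{SM}}}sitemapindex":
        if max_depth == 0:
            # An index listed inside another index: treated as an error here.
            raise ValueError(f"nested sitemap index at {sitemap_url}")
        urls: list[str] = []
        for loc in root.findall("sm:sitemap/sm:loc", NS):
            urls.extend(collect_urls((loc.text or "").strip(), fetch, max_depth - 1))
        return urls
    # A regular <urlset>: collect each <url><loc> value in document order.
    return [(loc.text or "").strip() for loc in root.findall("sm:url/sm:loc", NS)]


def scheme_report(urls: list[str]) -> dict[str, int]:
    """Count URLs per scheme; having both 'http' and 'https' means mixed protocols."""
    counts: dict[str, int] = {}
    for url in urls:
        scheme = urlsplit(url).scheme or "(none)"
        counts[scheme] = counts.get(scheme, 0) + 1
    return counts
```

A `scheme_report` result with more than one key points to the protocol inconsistency mentioned in the URL validation stage.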

Best Practices for XML Sitemaps

Follow these guidelines to maintain a healthy sitemap that maximizes your crawling efficiency:

- Only include canonical URLs: Every URL in your sitemap should return a 200 status code and be the canonical version of that page. Never include redirects, noindex pages, or non-canonical duplicates.

- Keep lastmod accurate: Only update the lastmod date when the page content actually changes. Artificially updating dates erodes Google's trust in your sitemap signals.

- Use sitemap index files for large sites: Split sitemaps by content type (posts, pages, products, categories) using a sitemap index. This makes debugging easier and keeps individual files manageable.

- Submit to Google Search Console: While Google can discover sitemaps via robots.txt, manually submitting through Search Console gives you indexing statistics and error reports.

- Automate generation: Use your CMS or a server-side script to generate sitemaps dynamically rather than maintaining them manually. Manual sitemaps inevitably become outdated.

- Validate after every major change: Site migrations, URL restructuring, and CMS updates are common causes of sitemap breakage. Always validate after significant changes.
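Automated generation that keeps lastmod honest and splits large sites into an index could look like the following Python sketch. It assumes your CMS or database can supply each canonical URL together with the date its content last changed. `build_urlset`, `build_sitemaps` and the `sitemap-N.xml` / `sitemap-index.xml` file names are hypothetical.

The sketch splits by URL count only and does not enforce the 50 MB limit. Splitting by content type would mean grouping pages by type before serializing them.

```python
import xml.etree.ElementTree as ET
from datetime import date

SM = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000


def build_urlset(pages: list[tuple[str, date]]) -> bytes:
    """Serialize (canonical URL, last content change) pairs as one <urlset>."""
    root = ET.Element("urlset", xmlns=SM)
    for loc, changed in pages:
        url = ET.SubElement(root, "url")
        ET.SubElement(url, "loc").text = loc
        # lastmod is the date the content really changed, never "today" by default.
        ET.SubElement(url, "lastmod").text = changed.isoformat()
    return ET.tostring(root, encoding="utf-8", xml_declaration=True)


def build_sitemaps(pages: list[tuple[str, date]], base_url: str) -> dict[str, bytes]:
    """Split pages into files of at most 50,000 URLs plus one sitemap index.

    Returns {filename: xml_bytes}; base_url is where the files will be hosted.
    """
    files: dict[str, bytes] = {}
    index = ET.Element("sitemapindex", xmlns=SM)
    for n, start in enumerate(range(0, len(pages), MAX_URLS), start=1):
        chunk = pages[start:start + MAX_URLS]
        name = f"sitemap-{n}.xml"
        files[name] = build_urlset(chunk)
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = f"{base_url.rstrip('/')}/{name}"
        # A child sitemap's lastmod is the newest content change it contains.
        ET.SubElement(entry, "lastmod").text = max(d for _, d in chunk).isoformat()
    files["sitemap-index.xml"] = ET.tostring(index, encoding="utf-8", xml_declaration=True)
    return files
```

Running this from a scheduled job or a publish hook keeps the files in step with the site, which matches the advice above to avoid hand-maintained sitemaps.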

Sitemap Checker vs Google Search Console

Google Search Console reports sitemap errors, but with significant delays, often days or weeks after submission. The Sorank Sitemap Checker provides instant validation, letting you catch and fix issues before submitting to Google. Use both tools together: validate with our checker first, then submit the clean sitemap to Search Console for ongoing monitoring.
