
Learn how to install the llms.txt file on your site. A guide to improve your site's ranking in AI systems.

With the rise of large language models (LLMs) and the proliferation of AI use cases, new standards are emerging to make information on websites easier to access. Among them is the llms.txt file, an AI-first counterpart inspired by robots.txt and sitemap.xml, but designed specifically to help LLMs and the tools built on them (for example ChatGPT, Claude, Cursor, Windsurf, Replit Ghostwriter) better understand and use your content.

What is llms.txt and why use it?

The llms.txt file is a text file written in Markdown (despite its .txt extension) and placed at the root of a website, like robots.txt. Its goal is to guide AIs directly during the inference phase (when a user or a conversational agent looks up specific information in real time) by providing a concise, curated overview of the site's key content.

In other words, llms.txt points AIs toward the essential content and spares them from parsing, crudely or at great cost, traditional HTML pages full of design elements, animations, and ads.

Thus, llms.txt streamlines the way AIs get a site overview, enabling better use during the inference phase (code suggestions, expert answers, ChatGPT plugins, etc.).
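As a rough illustration of how a tool might get that overview, the sketch below groups the links of an llms.txt file by section. It assumes the layout commonly described for the format (an H1 title, a blockquote summary, then `##` sections containing Markdown link lists). The sample content, the site name, and the `links_by_section` helper are all hypothetical.

```python
import re

# Hypothetical sample; the site name, section titles, and URLs are made up.
SAMPLE = """\
# Example Site

> Short summary of what the site offers.

## Docs

- [Quick start](https://example.com/docs/quickstart.md): install and first steps
- [API reference](https://example.com/docs/api.md)

## Optional

- [Changelog](https://example.com/changelog.md)
"""

# Matches Markdown links of the form [title](url).
LINK = re.compile(r"\[([^\]]+)\]\(([^)\s]+)\)")


def links_by_section(text):
    """Group (title, url) pairs under the '## ' heading they appear beneath."""
    sections = {}
    current = None
    for line in text.splitlines():
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        elif current is not None:
            sections[current].extend(LINK.findall(line))
    return sections


if __name__ == "__main__":
    for section, links in links_by_section(SAMPLE).items():
        print(section, [url for _title, url in links])
```

A real agent would likely do more (fetch the linked pages, respect the "Optional" section when context is tight), but the point is that a few lines of text processing replace a full HTML parse.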

What is the difference between llms.txt, robots.txt, and sitemap.xml?

robots.txt: tells bots (Googlebot, Bingbot, etc.) where they can or cannot crawl. It does not provide any content, only access rules.

sitemap.xml: lists all indexable pages for search engines (URL, last update date, priorities). Very useful for SEO, but it neither describes the content nor points to an "AI-friendly" version of the pages.

llms.txt: a Markdown file aimed at AIs that describes or points to pages used during inference. It can also include strategic excerpts, foundational external links, and even .md versions of your pages. It's an opt-in tool designed to serve agents directly. It complements, but does not replace, robots.txt or sitemap.xml.

The llms.txt file is meant to be simple and flexible. The proposed structure is a title, a short summary, and lists of links grouped under ## headings, including an "optional" section for secondary resources.

Note: URLs may end in .md if you want to provide the text/Markdown version of your pages directly.
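The proposed structure can be sketched with a small generator. This is a minimal example, assuming the usual layout (H1 title, blockquote summary, `##` sections of links). The `build_llms_txt` helper and every name and URL passed to it are placeholders, not part of any official tool.

```python
def build_llms_txt(title, summary, sections):
    """Render an llms.txt body: H1 title, blockquote summary, then one
    '## ' section per entry with a bulleted list of Markdown links.

    `sections` maps a heading to a list of (name, url, note) tuples;
    an empty note is simply omitted.
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            entry = f"- [{name}]({url})"
            if note:
                entry += f": {note}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


if __name__ == "__main__":
    # All names and URLs below are placeholders.
    print(build_llms_txt(
        "Example Site",
        "Short summary of what the site offers.",
        {
            "Docs": [
                ("Quick start", "https://example.com/docs/quickstart.md",
                 "install and first steps"),
            ],
            "Optional": [
                ("Changelog", "https://example.com/changelog.md", ""),
            ],
        },
    ))
```

The links here end in .md, in line with the note above, so an agent that follows them gets plain Markdown rather than a full HTML page.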

In the FastHTML documentation, there is an llms.txt (demonstration file) that points to the project's documentation pages.

Adopters use it in different ways: one publishes an llms-full.txt with its complete documentation, which makes it easier to use in IDEs or chatbots (e.g., Cursor) that load this file directly; another uses llms.txt to describe its services; a third offers both llms.txt and llms-full.txt to allow loading the documentation into a conversational agent.

Even though llms.txt is not aimed directly at traditional search engines, it can indirectly improve SEO.

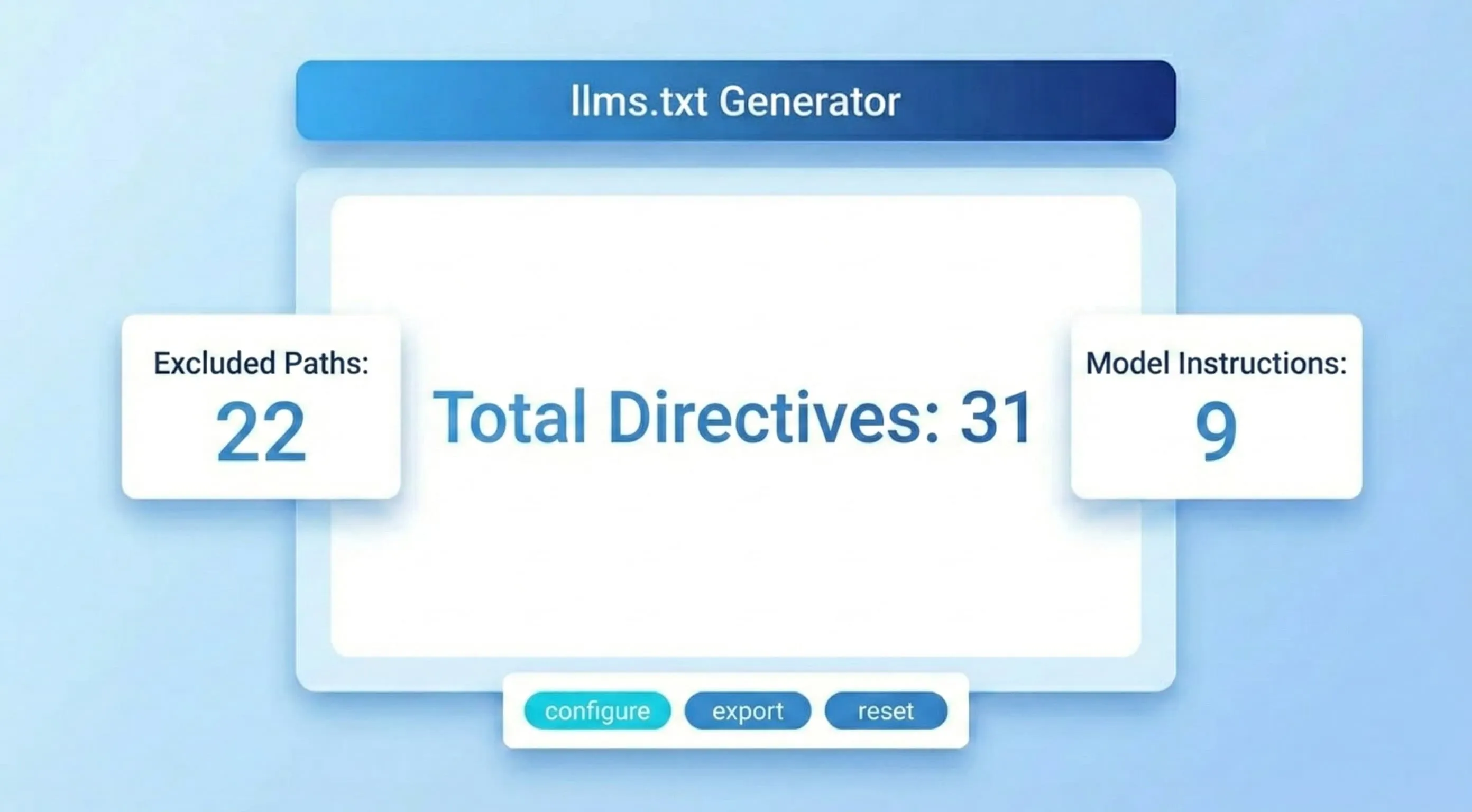

How to create your llms.txt?

Start with a title and a short summary (brief overview), then organize your links under ## headings, "optional" sections, etc.

Several open-source projects and SaaS services offer to generate your llms.txt automatically:

An editor extension (LLMs.txt Explorer): to load or create an llms.txt from the editor.

The approach has limits. An inaccurate llms.txt can mislead AIs: if LLMs blindly rely on this file, they can "hallucinate" or spread false information. Its location is not settled either: some propose the /.well-known/llms.txt path to align with RFC 8615, while others prefer to use example.com/llms.txt directly.

Should you adopt llms.txt to boost your AI SEO?

llms.txt is not mandatory, but it is gaining popularity among smart IDEs, AI plugins, and open-source communities. It simplifies integrating content into AI projects in real time, avoids wasting tokens, and helps language models understand documentation better.
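On the question of where the file lives (/.well-known/llms.txt versus the site root), a client does not have to pick a side: it can derive both candidate URLs and try them in turn. The sketch below only builds the URLs and performs no network request. The `candidate_llms_txt_urls` helper is hypothetical, and trying the root path first is an arbitrary choice.

```python
from urllib.parse import urljoin


def candidate_llms_txt_urls(site_url):
    """Return the two locations discussed in the text, root path first.

    A leading slash makes urljoin resolve against the site root,
    whatever path site_url itself contains.
    """
    return [
        urljoin(site_url, "/llms.txt"),
        urljoin(site_url, "/.well-known/llms.txt"),
    ]


if __name__ == "__main__":
    for url in candidate_llms_txt_urls("https://example.com/blog/post"):
        print(url)
```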

The llms.txt file is establishing itself as a new cornerstone of the SEO and AI toolkit. By providing a hierarchical summary of your key content, it makes contextual search by conversational agents easier and showcases your technical documentation. As chatbots and smart IDEs become the "new gateway" to information, adopting llms.txt can make a difference.

Don't wait to put it in place! Take advantage today of the synergy between your traditional SEO and this new AI layer to deliver the best possible experience to all your users, humans and artificial intelligences alike.

llms.txt is not strictly required: most AIs can already “scrape” the web. However, llms.txt streamlines the context provided at inference and makes it more reliable. It is particularly useful for customer support, code auto-completion, technical documentation, etc.

llms.txt does not replace robots.txt: they are two different things. robots.txt is mainly used to control crawler access. llms.txt is aimed at AIs during the information-seeking (inference) phase and offers a concise format that leverages Markdown versions of your resources.

llms.txt is an optional standard. Not creating one simply means not offering AIs this privileged bridge. If you want to block all usage, you should configure your robots.txt or implement technical measures (blocking user agents, etc.). Even then, nothing guarantees that all LLMs or scrapers will respect these instructions.
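To check what a given robots.txt rule set actually blocks, Python's standard `urllib.robotparser` can evaluate it offline. The sketch below is only an illustration: the rules, the URL, and the `is_allowed` helper are made up, and "GPTBot" serves purely as an example user agent string. It shows what a compliant crawler would conclude, not what every scraper will do.

```python
from urllib.robotparser import RobotFileParser

# Example rules; "GPTBot" is used here only as an illustrative user agent.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /
"""


def is_allowed(robots_txt, user_agent, url):
    """Return True if the given rules let user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    page = "https://example.com/docs/page"
    print("GPTBot:", is_allowed(ROBOTS_TXT, "GPTBot", page))
    print("SomeOtherBot:", is_allowed(ROBOTS_TXT, "SomeOtherBot", page))
```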
